Books and Film 2025

After slacking off all year, I did a bulk update of the Books and Film pages for 2025, plus a few from 2026. Book highlights: Moonbound and a le Carré retrospective. On film (it was mostly a late rush of TV) One Battle After Another was a cinema highlight, and Severance S2 the TV star. Also reorganised the pages to be more visual: so many great book covers and movie posters out there.

2026-01-31

Books and Film 2024

Annual update of the Books and Film pages for 2024. Once again failing to keep records during the year, but hopefully close to complete (though I’m sure I’ve missed some reading). Book highlights include The Book of Love and Orbital, while on film Severance, Dune Part Two and Call Me By Your Name were excellent. Utter failure on TV/film viewing given the number of strong recommendations out there—my watch lists grow ever longer.

2024-12-31

Books and Film 2023

Updated the Books and Film pages for 2023 (and some very late 2022 entries). Necessarily incomplete as memory is a fragile thing. Book highlights include Between Two Fires, Klara and the Sun, and Cold Enough for Snow (plus a reread of Gideon and Harrow), while on the film side Barbie, The Lobster, Joker, and Maestro all stood out.

2023-12-31

A Moderately Expensive Very Nice Monitor

It’s been a long time coming, but I finally purchased a unicorn monitor: 4K, 144Hz, 32".

After reading a few positive reviews, I picked just about the first model that came on the market in Australia, the Gigabyte Aorus FI32U. Luckily it was also more affordable than I expected, coming in at AU$1500ish (and less than the ~$2k price for models released not long afterwards).

It’s still expensive, obviously, but given it’s something I stare at for hours on end, and will for years, it seems a fair price to pay.

As usual, as soon as I got it I forgot all about the specs, endless research, flaws, etc, and just started using it. I’ve had it a few months now and it’s lovely - having crisp text is very rewarding, reminding me of the beauty of retina MacBook screens.

There are some caveats - mainly my GPU being underpowered (it’s a GTX1070) so it can’t run 144Hz without some dodgy colour banding (120Hz is fine) - but I’m very happy with it. Next is a GPU to power it when gaming, but as everyone knows they are absurdly expensive and not getting cheaper anytime soon.

2022-01-04

Observing anonymity

The Observatory of Anonymity is a fascinating tool from Imperial College London that demonstrates just how much of a smokescreen anonymised datasets can be. The tool asks you to choose various demographic sets, displaying how each choice narrows down the chance of being identified.

Australia isn’t included, but if I lived in London my choices led from a 1 in 70 million chance all the way down to 1 in 326. Which is still enough to be anonymous apparently, but far more select - and I’m the definition of a generic person. Any slightly less average choice makes it far more precise.

As Cory Doctorow observes, the problem becomes particularly acute when two datasets are available, which allows cross-checking and a vastly increased ability to target individuals, despite the supposed anonymisation.
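A rough sketch of that cross-checking idea, using entirely made-up toy records and hypothetical field names (this is not how the Observatory itself works, just the general shape of joining two datasets on shared demographic fields):

```python
from collections import defaultdict

# Toy illustration only: every record and field name here is invented.

# An "anonymised" dataset: names removed, demographic fields kept.
health = [
    {"postcode": "N1", "birth_year": 1980, "sex": "M", "diagnosis": "asthma"},
    {"postcode": "N1", "birth_year": 1980, "sex": "F", "diagnosis": "diabetes"},
    {"postcode": "E2", "birth_year": 1975, "sex": "M", "diagnosis": "migraine"},
]

# A second dataset that still carries names.
public = [
    {"name": "Alex", "postcode": "N1", "birth_year": 1980, "sex": "M"},
    {"name": "Sam", "postcode": "E2", "birth_year": 1975, "sex": "M"},
    {"name": "Jo", "postcode": "E2", "birth_year": 1975, "sex": "M"},
]

# Fields shared by both datasets that can act as a join key.
QUASI_IDENTIFIERS = ("postcode", "birth_year", "sex")


def key(record):
    """Build the join key from the shared demographic fields."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link(anonymised, named):
    """Return {name: anonymised record} for unambiguous matches only."""
    anon_by_key = defaultdict(list)
    for record in anonymised:
        anon_by_key[key(record)].append(record)

    names_by_key = defaultdict(list)
    for person in named:
        names_by_key[key(person)].append(person["name"])

    matches = {}
    for k, names in names_by_key.items():
        records = anon_by_key.get(k, [])
        # Only count a match when exactly one person and one record share the key.
        if len(names) == 1 and len(records) == 1:
            matches[names[0]] = records[0]
    return matches


if __name__ == "__main__":
    for name, record in link(health, public).items():
        print(name, "->", record["diagnosis"])
```

In this toy data only one person is unique on all three fields in both datasets, so only that person is re-identified; the two people who share a key stay ambiguous. Every extra shared field makes unique keys more likely.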

2021-04-25

Pizza Toast & Coffee

Craig Mod, walker, photographer, philosopher, and now videographer, has produced a lovely short film on the Japanese tradition of pizza toast & coffee, or kissaten.

Many years ago I toured a tiny section of Japan with my mum, and one morning we were desperate for a change from the breakfasts of fish and miso. We wandered the streets and daringly (for us - we had no language) ventured into a small cafe that promised toast.

It was wonderful - not pizza toast, like Craig, but a wedge of just as thick, white, paper-light bread, toasted to perfection, and smothered in butter. It was also the first cup of coffee I’d ever enjoyed, largely because it was served with lashings of cream, making it more like a bowl of molten dark chocolate than coffee. Now I drink double-shot ristretto piccolos, which I guess is progress?

Craig is a bit of a wonder. He writes beautifully on his experiences walking and living in Japan, the craft of bookmaking, and the challenges and joys of running a membership program that funds all this creativity. His attention to detail on all his projects is inspiring, as evidenced in the pizza video - the sound, editing, and typeface choices are all perfect.

Worth following on any platform he turns his hand to.

2021-03-29

Posting to Micro.blog

After seeing Colin Devroe respond to my post about his photo process, I realised that there was no good way for people to interact with this site. Not that I’m expecting much, but it helps to have a way to contact an author for corrections if nothing else.

Colin managed to dig out my dormant Twitter handle for this site (that I’d more or less forgotten about) to let me know there was a problem with the RSS feed - very kind of him to point it out, and now fixed hopefully.

As a result of this interaction, I started looking around for a way to automatically syndicate posts to Twitter again (which used to be easy with WordPress). nicemachine is published using GitHub and Netlify, so it looked like this was going to be complicated.

Micro.blog to the rescue! As well as Twitter I thought I should finally push posts to my empty Micro.blog account too, which is incredibly easy - just add an RSS feed to your account and it’s done.

And as a nice bonus, the Micro.blog setup solved the Twitter problem too - it will cross-post anything coming in on the feed to Twitter. I’ll be interested to see if the Twitter link is to the original post or to the Micro.blog feed - hopefully the former.

In any case, posts from here should now be turning up on both platforms. Hello if you found this in either!

2021-03-28

Tyler Hall’s Photo Management

On the topic of photo management, developer Tyler Hall is working[1] on Iris, a macOS photo management tool (‘the culmination of over a decade’s worth of thinking and experimenting’) with a great philosophy of power and privacy:

Iris is for people who care about their photos and videos and believe they’re worth safeguarding in a private, future-proof format that will outlive their grandchildren.

Iris is for family historians and archivists. As well as those who want a better, more robust, and dare I say nerdy way to manage an ever-growing photo and video library.

Iris is pragmatic and does not impose a certain workflow on you. Just point the app at your photo library on disk, and Iris will take it from there.

All sounds perfect for someone running their site on Hugo :-) Speaking of, the ability to export to a static site is a unique(?) and promising feature:

Iris can export a beautiful static website of your library, specific albums, or a custom search query. Then, just upload that to your own website.

Tyler’s blog is another marvel of well thought out posts that explain his (sometimes heated!) software frustrations and how he’s gone about solving them. He’s created a wealth of simple but elegant macOS utilities, often free, to enhance his workflows - the excellent looking TextBuddy being the most recent example.

He’s posted about his efforts to take control of his photo data in the past, and the quality of his existing work gives me high hopes for Iris. I don’t even own a Mac (waiting on the near-mythical 14" Mx MacBook) and I’m interested.

-

[1] Or at least I hope he’s working on it - there have been no updates for a few months. He does call it his ‘white whale’, so I guess we should expect slow but steady progress!

2021-03-25

Colin Devroe’s Photo Management

Colin Devroe has posted his updated photo management workflow, a process that has taken him considerable effort to develop:

In 2018 I decided to set out on a sort of mission; I wanted to create a photo library that would be relatively future proof. Should I decide to use a different app or storage platform, I’d want to be able to do so easily rather than painfully.

Colin uses macOS, but the principles in the article apply to any platform.

His philosophy on keeping the photo metadata with the image file is exactly what I would want to do - protecting the metadata from a corrupt database or unexpected software changes, and allowing portability. I take the same approach to my ripped FLAC music library, which is gathering dust these days but still a nice backup for the days you want something the streaming services don’t have or have suddenly dropped.

I’d like to see another post on lessons learnt and why not to do certain things. Also curious about the step where Colin fast-filters the keepers - is that just using Finder to flick through the images? There’s probably no need for anything more complex, which is a refreshing thought.

Also interesting that despite working on the workflow and tool selection for years, there’s still a missing piece: search on mobile.

I appreciate the effort people take to post things like this. Every post adds to the pool of knowledge to help avoid easily made but difficult to change mistakes, like Colin’s own findings when depending on Apple Photos as the main tool.

Dr Drang is one of the best examples of this kind of writing, using his posts to show the inner workings of his processes and why he chose certain paths over others - and as a reference site for his own memory.

2021-03-25

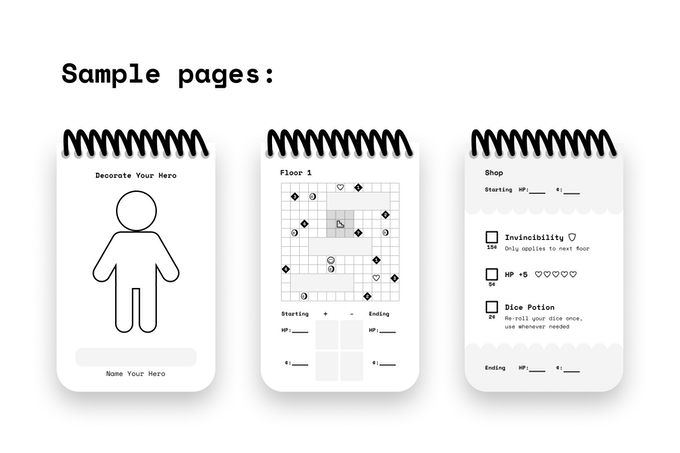

Paper dungeons

Tom Brinton’s Kickstarter for Paper Apps DUNGEON: a $5, procedurally-generated, paper dungeon RPG, nestled in a pocket-sized 50-page spiral notepad. Irresistible.

2021-01-18